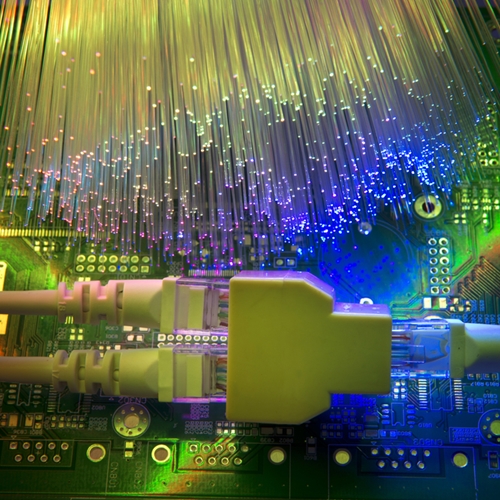

In August, researchers from the Massachusetts Institute of Technology will be presenting a paper on a new network management system they’ve dubbed Fastpass, which allows for “no-wait data centers.” At the annual conference for the ACM Special Interest Group on Data Communication, the researchers will describe how they have been able to reduce the queue length for network transmission by over 99 percent.

Unlike a standard decentralized configuration, the Fastpass system uses a centralized model in which a single arbiter makes all routing decisions for the data center. The arbiter analyzes network traffic holistically and decides when and along which path each transmission should go. In experimental testing, the researchers showed that a single eight-core arbiter machine could handle 2.2 terabits of data per second. Based on that testing, they believe the design could scale to a network of as many as 1,000 switches.

Working with Facebook, the MIT researchers tested Fastpass in one of the company's data centers and showed latency reductions that practically eliminated the normal queue. The report stated that, even under the heaviest traffic conditions, average request latency dropped from 3.65 microseconds to 0.23 microseconds, a reduction of roughly 94 percent.

The Fastpass system uses a new way of dividing the processing needed to calculate transmission timings among multiple cores. Each core is assigned one time slot and schedules requests to whichever servers are free first in that slot. All remaining work is passed on to the next core, which handles the following time slot in the same way. The researchers admit that the approach seems counterintuitive, but they showed that even with the added lag of sending requests through the arbiter, Fastpass dramatically improved overall network performance.
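The per-core, per-time-slot division of work can be illustrated with a small sketch. This is not the researchers' implementation: the function names (`allocate_timeslot`, `schedule_requests`), the `(source, destination)` request format, and the round-robin assignment of time slots to cores are all assumptions made for illustration. Each simulated core fills one time slot greedily, admitting a request only if both its source and destination are still free in that slot, and hands everything else to the next core.

```python
def allocate_timeslot(pending):
    """Fill one time slot greedily (hypothetical helper).

    A request (src, dst) is granted only if neither endpoint is
    already in use during this slot. Returns (granted, leftover).
    """
    busy_src, busy_dst = set(), set()
    granted, leftover = [], []
    for src, dst in pending:
        if src not in busy_src and dst not in busy_dst:
            busy_src.add(src)
            busy_dst.add(dst)
            granted.append((src, dst))
        else:
            leftover.append((src, dst))
    return granted, leftover


def schedule_requests(requests, num_cores=8):
    """Assign requests to time slots, one slot per core in turn.

    Assumption: cores take slots round-robin; leftover requests are
    passed to the core handling the next slot.
    Returns a list of (slot, core, granted_requests) tuples.
    """
    plan = []
    pending = list(requests)
    slot = 0
    while pending:
        core = slot % num_cores
        # The first pending request is always granted, so the loop terminates.
        granted, pending = allocate_timeslot(pending)
        plan.append((slot, core, granted))
        slot += 1
    return plan


if __name__ == "__main__":
    demo = [("A", "B"), ("A", "C"), ("D", "B"), ("C", "D")]
    for slot, core, granted in schedule_requests(demo):
        print(f"slot {slot} (core {core}): {granted}")
```

In this toy run, the requests from A to C and from D to B cannot share the first slot with A to B, so they are deferred to the second slot rather than queued inside the network, which is the intuition behind the reported drop in queue length.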

In the long run, the MIT researchers believe the system could be used in rapid-deploy data centers to create highly scalable, centralized systems that deliver faster, more efficient, and less expensive networking.